When the Fed and Treasury call an emergency meeting, listen

On April 8, 2026, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell did something almost without precedent: they summoned the CEOs of Citigroup, Goldman Sachs, Bank of America, Morgan Stanley, and Wells Fargo to an emergency session at Treasury headquarters in Washington.

The subject: Anthropic’s new AI model, Claude Mythos Preview — and what it means for the security of the global financial system.

The meeting focused on a new AI model so capable of finding vulnerabilities that Anthropic says it can be released only to a limited number of carefully chosen parties.

This signals that policymakers now view AI-driven cyber risk as a financial stability concern — not just a technology problem.

This is not a future risk. This is a structural shift that is already underway.

What Mythos actually revealed

Anthropic’s researchers say Mythos Preview was able to detect thousands of high- and critical-severity bugs and software defects, with vulnerabilities identified in most major operating systems and browsers.

But the more significant finding wasn’t the volume — it was the nature of the capability.

The model can single-handedly perform complex, effective hacking tasks, including identifying multiple undisclosed vulnerabilities, writing code that can exploit them, and chaining those exploits together to penetrate complex software.

Among the discoveries: a vulnerability in OpenBSD that had escaped detection for 27 years, and a flaw in the multimedia framework FFmpeg that had survived 5 million previous automated tests.

Critically, Anthropic did not explicitly train Mythos Preview to have these capabilities. Rather, they emerged as a downstream consequence of general improvements in code, reasoning, and autonomy.

This matters enormously. These capabilities didn’t require deliberate offensive engineering. They emerged from general improvements in capability, so future models at or above this level should be expected to have them too.

The floodgates were already opening — Mythos is acceleration

Before Mythos, the vulnerability landscape was already deteriorating faster than most security teams acknowledged.

48,174 new CVEs were published in 2025, one of the highest annual totals ever recorded. That is roughly 132 vulnerabilities disclosed every day.

CVE volumes are up 20% from 2024 and 66% from 2023. If trends continue, the number of CVEs in 2026 could reach anywhere from 57,600 to 79,680.
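The projection above is simple arithmetic, and a short sketch makes its assumptions visible. The helper names below are illustrative only. Note that the quoted range of 57,600 to 79,680 matches the two growth rates applied to a rounded base of 48,000, and that the 66% figure is a two-year change being used here as a one-year upper bound.

```python
# Figures quoted in the text; everything else is an illustrative assumption.
CVES_2025 = 48_174
GROWTH_PCT_VS_2024 = 20   # one-year change
GROWTH_PCT_VS_2023 = 66   # two-year change, used in the text as an upper bound


def per_day(annual_total: int, days: int = 365) -> float:
    """Average number of CVEs disclosed per day."""
    return annual_total / days


def project(base: int, growth_pct: int) -> float:
    """Apply a percentage growth rate to a base annual total."""
    return base * (100 + growth_pct) / 100


daily = per_day(CVES_2025)

# Range obtained from the rounded base of 48,000.
low_rounded = project(48_000, GROWTH_PCT_VS_2024)
high_rounded = project(48_000, GROWTH_PCT_VS_2023)

# Same growth rates applied to the exact 2025 total.
low_exact = project(CVES_2025, GROWTH_PCT_VS_2024)
high_exact = project(CVES_2025, GROWTH_PCT_VS_2023)

print(f"Daily average in 2025: {daily:.1f}")
print(f"2026 range, rounded base: {low_rounded:,.0f} to {high_rounded:,.0f}")
print(f"2026 range, exact base:   {low_exact:,.0f} to {high_exact:,.0f}")
```

Either way, the point of the projection survives: even the low end implies well over 150 disclosures per day in 2026.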

This is the pre-Mythos baseline.

Now consider what Mythos-class AI adds: vulnerabilities discovered continuously across every major operating system and web browser, with capabilities that frontier AI models will share — potentially beyond actors committed to deploying them safely.

The floodgates didn’t open on April 7. They were already cracking. Mythos blew them off their hinges.

Why this breaks detection — the math is unforgiving

A vulnerability is not just a bug. It is an entry point: a road by which previously unseen threats enter your environment.

Until recently, those roads were narrow — limited by human discovery, manual exploitation, and time.

AI has turned them into multi-lane highways — increasing both the number of entry points and the speed at which new threats arrive.

And once those highways exist, the traffic doesn’t stop.

And you don’t control what’s coming through.

Mythos-class AI doesn’t just find vulnerabilities — it chains them together autonomously, creating multi-stage exploit paths that combine multiple unknown flaws into a single, coordinated attack.

This industrializes the delivery of new threats — at a scale detection was never designed to handle.

The math makes this structural, not cyclical:

New threat generation rate → exponential

Detection classification rate → bounded

Detection coverage = Known / Total → 0 (asymptotically)
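A toy simulation can make the ratio concrete. This is a minimal sketch under stated assumptions, not a measurement: the starting threat count, the 50% growth per period, and the fixed classification capacity are all made-up parameters chosen only to show the shape of the curve.

```python
def coverage_over_time(
    periods: int,
    initial_threats: float = 1_000.0,      # assumed starting population
    growth_rate: float = 0.5,              # assumed 50% growth per period
    classify_per_period: float = 800.0,    # assumed fixed analyst/engine capacity
) -> list[float]:
    """Return detection coverage (known / total) at each period."""
    total = initial_threats
    known = 0.0
    history = []
    for _ in range(periods):
        # Bounded classification: capacity adds a fixed amount per period,
        # and can never exceed the number of threats that exist.
        known = min(total, known + classify_per_period)
        history.append(known / total)
        # Exponential generation: the population multiplies each period.
        total *= 1 + growth_rate
    return history


history = coverage_over_time(periods=30)
for period in (0, 5, 10, 20, 29):
    print(f"period {period:2d}: coverage = {history[period]:.4%}")
```

With these parameters, coverage keeps up for the first few periods and then falls toward zero. Raising the classification capacity delays the decline but does not change its direction, as long as the capacity stays bounded while generation compounds.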

The data confirms this is already happening:

Zero-day exploitation rose 46% in the first half of 2025. 32.1% of newly exploited CVEs showed exploitation on or before the day of public disclosure, which makes them zero-day attacks in effect.

Attacks targeting website vulnerabilities increased 56% year-over-year, reaching 6.29 billion attacks in 2025.

Detection is not failing because it is bad. It is failing because the problem space is becoming mathematically unbounded.

Patching is necessary — but it has lost the race

There is a growing narrative that “patching is dead.” That’s an overstatement. Patching still matters. But the data shows it can no longer restore a secure state on its own.

The median time to exploit a vulnerability is now under 5 days.

In 2020, attackers took an average of 30 days to exploit a vulnerability after CVE disclosure. By 2025, 28% of vulnerabilities were exploited within one day.

For many sectors, it takes around 361 days to close half of internet-facing vulnerabilities. Healthcare averages 519 days and education 577 days, while exploitation often lands within zero to five days.

The gap between vulnerability disclosure and exploitation has collapsed from weeks to days, and in many cases hours. The gap between exploitation and patching is still measured in months: 32% of identified vulnerabilities remained unpatched for more than 180 days.
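The size of the mismatch can be worked out from the figures quoted above. The sketch below treats the "under 5 days" median as a 5-day upper bound and compares it with the time each sector takes to close half of its internet-facing vulnerabilities. The statistics may come from different sources and measure slightly different things, so read the result as an order-of-magnitude comparison; the function and variable names are illustrative.

```python
# Days until exploitation, using the quoted "under 5 days" as an upper bound.
MEDIAN_EXPLOIT_DAYS = 5

# Days to close half of internet-facing vulnerabilities, as quoted in the text.
DAYS_TO_CLOSE_HALF = {
    "many sectors": 361,
    "healthcare": 519,
    "education": 577,
}


def exposure_window(remediation_days: int, exploit_days: int = MEDIAN_EXPLOIT_DAYS) -> int:
    """Days a vulnerability is exploitable before it is remediated."""
    return max(0, remediation_days - exploit_days)


for sector, days in DAYS_TO_CLOSE_HALF.items():
    window = exposure_window(days)
    ratio = days / MEDIAN_EXPLOIT_DAYS
    print(f"{sector:>12}: exposed for {window} days, "
          f"remediation is {ratio:.0f}x slower than exploitation")
```

Even in the best case listed, half of the exposed vulnerabilities stay open for nearly a year after attackers could plausibly have a working exploit.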

This is not a process failure. It is a structural mismatch between attacker velocity and defender capacity — one that AI has now made permanent.

And AI is widening that mismatch every day.

What vulnerabilities actually represent now

Before AI-assisted exploitation, a vulnerability was a bug that required significant skill to weaponize. The discovery-to-exploit chain was long enough that defenders had meaningful time to respond.

That is no longer true.

Today, a vulnerability is best understood as:

An unlock mechanism for previously unseen threat delivery.

Once a vulnerability exists, AI can:

- Discover it autonomously

- Generate a working exploit

- Chain it with other vulnerabilities for deeper access

- Deliver a threat your detection has never classified

The fallout — for economies, public safety, and national security — could be severe, Anthropic warned. Mythos presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.

And those capabilities will proliferate. They will not stay exclusive to responsible actors.

The real question security leaders must answer

Most security architectures still ask: “Can we detect the threat?”

Given the math above, that is the wrong question.

The right question is: “What happens when a previously unseen threat executes — regardless of how it arrived or whether you recognized it?”

Because in an environment with more than 130 new CVEs per day, exploitation measured in hours, patching measured in months, and AI-generated exploit chains that no prior signature has ever seen, previously unseen threat execution is not an edge case. It is a baseline condition.

The required shift: from trying to stop threats… to ensuring they can’t cause damage

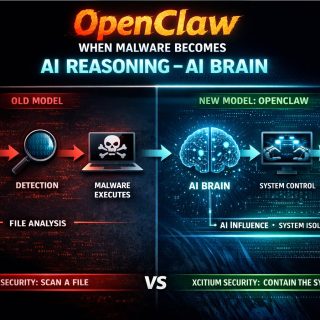

Traditional security architecture:

Prevent → Detect → Respond → Patch

This model worked when the threat landscape was bounded. It assumes that enough threats can be recognized in advance to make detection viable as a primary control.

That assumption is now broken.

The new required model:

Assume previously unseen threat execution → Virtualize at runtime → Preserve system integrity regardless of delivery mechanism

This means security must become invariant to:

- How the threat arrived (which vulnerability it used)

- What the threat looks like (seen before or never seen)

- Whether detection fired (it may not have)
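The invariance described above can be sketched as a default-deny execution policy. This is a conceptual illustration only, not Xcitium's implementation: the type names, the hash-based allowlist, and the `decide` function are all hypothetical. The point it shows is that the decision depends on a single input, whether the file has been verified as trusted, and deliberately ignores the delivery vector and the detection verdict.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    RUN_ON_HOST = "run_on_host"
    RUN_VIRTUALIZED = "run_virtualized"


@dataclass(frozen=True)
class ExecutionRequest:
    file_hash: str
    delivery_vector: str      # which vulnerability or channel delivered the file
    detection_alerted: bool   # whether any detection engine flagged it


def decide(request: ExecutionRequest, trusted_hashes: frozenset[str]) -> Action:
    """Default-deny policy: only explicitly trusted files run on the real host.

    The delivery vector and detection verdict are intentionally unused, so the
    outcome is the same however the file arrived and whether or not it was seen.
    """
    if request.file_hash in trusted_hashes:
        return Action.RUN_ON_HOST
    return Action.RUN_VIRTUALIZED


# Hypothetical allowlist of verified files.
trusted = frozenset({"hash-of-verified-app"})

unknown_quiet = ExecutionRequest("hash-never-seen", "browser 0-day", detection_alerted=False)
unknown_flagged = ExecutionRequest("hash-never-seen", "email attachment", detection_alerted=True)
known_good = ExecutionRequest("hash-of-verified-app", "software update", detection_alerted=False)

for req in (unknown_quiet, unknown_flagged, known_good):
    print(req.delivery_vector, "->", decide(req, trusted).value)
```

Because the policy is a pure function of trust status, it is deterministic: the same unknown file receives the same treatment every time, with no dependence on a classifier's confidence.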

The Xcitium approach — built for this reality before it had a name

At Xcitium, our architecture starts from three premises:

Previously unseen threats will execute. Detection will not catch all of them. The system must remain safe anyway.

This is not a reactive posture. It is the logical conclusion of the math above — a conclusion we reached long before Mythos made it undeniable to regulators.

Our ZeroDwell Containment technology:

- Automatically isolates every unknown and untrusted file at the kernel level — regardless of which vulnerability delivered it, which exploit chain was used, or what the threat contains

- Virtualizes execution away from the actual system, allowing the process to run inside isolation with no ability to cause damage

- Operates independently of detection — virtualization at runtime is deterministic, not probabilistic

The result: previously unseen threats that execute inside our environment cannot cause damage. Not because we recognized them. Because we virtualize everything we haven’t explicitly verified as trusted.

Proven at the scale the problem demands

Every day, Xcitium processes billions of previously unclassified files and objects — files that no detection engine has ever seen before.

Every day, those files attempt to execute.

Every day, they are isolated — without needing to be recognized.

While the industry was building faster detection, we were solving a different problem: how do you keep a system safe when you cannot possibly know every threat in advance?

The answer is deterministic virtualization at the point of execution.

Five years of historical transparency is the best proof available in cybersecurity.

Final thought

The Powell-Bessent emergency meeting was not about a specific attack. It was about a structural shift: AI has permanently altered the ratio between how fast previously unseen threats can be created and how fast defenders can recognize them.

Frontier AI capabilities are likely to advance substantially over just the next few months. This is not stabilizing. It is accelerating.

AI didn’t just increase cyber risk — it removed the limits that made detection viable.

The future of cybersecurity is not about recognizing every threat.

It’s about making sure that even when you don’t — nothing gets compromised.

https://www.nbcnews.com/tech/security/anthropic-project-glasswing-mythos-preview-claude-gets-limited-release-rcna267234

https://www.platformer.news/anthropic-mythos-cybersecurity-risk-experts/

https://thehackernews.com/2026/04/anthropics-claude-mythos-finds.html

https://securityboulevard.com/2026/03/46-vulnerability-statistics-2026-key-trends-in-discovery-exploitation-and-risk/

https://www.anthropic.com/glasswing

When the Fed and Treasury called an emergency meeting over AI-generated threats, the problem they were describing was simple: AI now creates threats faster than detection can classify them. Xcitium solves that by virtualizing every unrecognized file at execution — so even threats no one has ever seen before cannot cause damage.